Facebook Gets Serious About Fake News

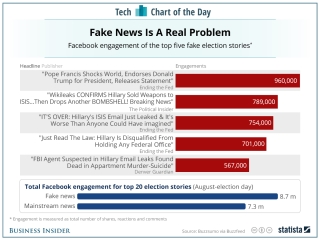

Facebook is getting serious about addressing fake news on its site. The issue came to a head when an armed man fired a shot in a pizzeria, believing a story that Hillary Clinton ran a child sex ring there.

First, Facebook is partnering with outside fact checkers (ABC News, The Associated Press, FactCheck.org, Politifact, and Snopes), who will review stories identified as possibly untrue. Stories that don't pass muster will be flagged as "disputed," will appear lower in the news feed, and will carry another warning if people want to share them.

In a post on its website, Facebook explained the changes in text, with images from the app, and in this video.

Although Mark Zuckerberg has downplayed the issue as affecting only 1% of Facebook posts, he clearly sees the company's responsibility. He wrote a post of his own to explain how the company is taking action, and the company's post ends, "It's important to us that the stories you see on Facebook are authentic and meaningful. We're excited about this progress, but we know there's more to be done. We're going to keep working on this problem for as long as it takes to get it right."

Discussion:

- What's your view of this approach? Will these strategies work? What else, if anything, should Facebook do?

- How well is Facebook communicating these changes? Review the blog post text, images, and video.