Instagram's New Bully Filter

Instagram has introduced a filter that hides comments it considers bullying. The filter, which runs on artificial intelligence (AI), can detect "offensive and spammy" comments in English and in at least eight additional languages. The filter is on by default, but users can opt out if they want to see such comments, and they can also specify particular words to screen out.

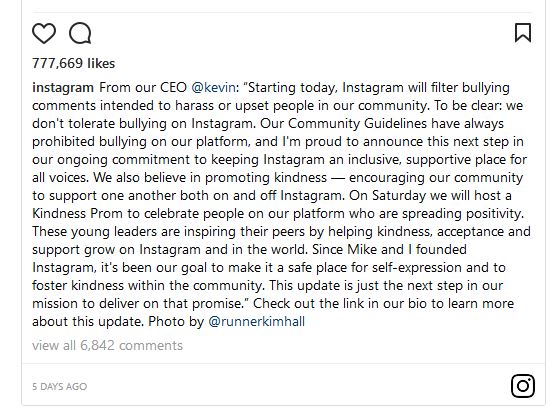

In an Instagram post, shown here, and in a longer post titled "Protecting Our Community from Bullying Comments," CEO and Co-Founder Kevin Systrom promised more diligence, particularly to protect young users:

We also believe in promoting kindness — encouraging our community to support one another both on and off Instagram. On Saturday we will host a Kindness Prom to celebrate people in our community who are spreading positivity. These young leaders are inspiring their peers by helping kindness, acceptance and support grow on Instagram and in the world.

Research shows the danger of online bullying: in one study of 2,000 middle schoolers, those who experienced cyberbullying were twice as likely to attempt suicide as those who did not.

Discussion:

- Analyze Instagram's announcement of the filter. Who are the audiences, and what are the communication objectives? How well does the message achieve those objectives?

- What's your view of Instagram's response to the problem of cyberbullying? Are the company leaders doing enough, or should they do more?

- How does this news relate to the leadership character dimension of vulnerability?